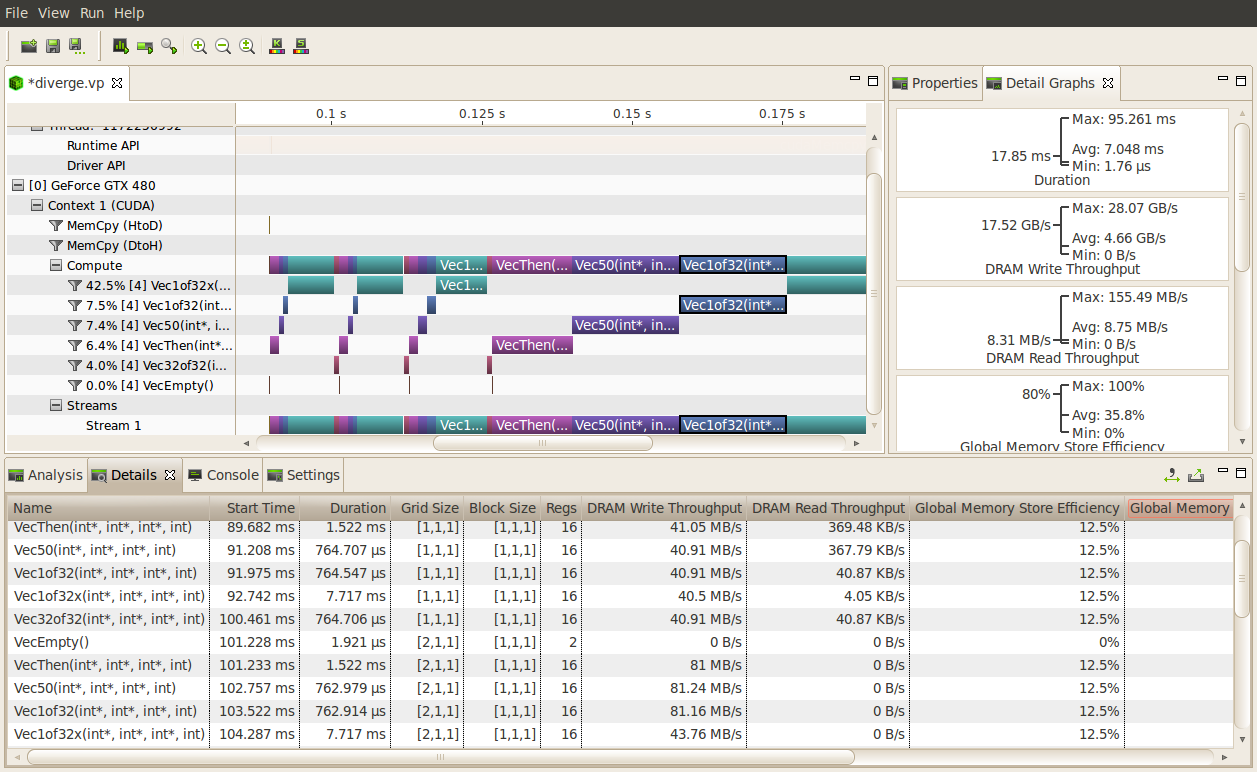

CUDA Pro Tip: nvprof is Your Handy Universal GPU Profiler. That article walks you through some basics of how to use nvprof to profile and time your application (the example is an n-body physics simulator). Specifically, if you run something like `nvprof --print-gpu-trace`, your output will include a chronological trace of individual GPU activities, such as kernel launches and memory copies.

Before you start profiling with NVPROF, the appropriate module needs to be loaded. NVPROF is part of the CUDA package, so run `module avail cuda` to see which versions are currently available with the compiler and MPI modules you have loaded. For a comprehensive list of CUDA modules, run `module -r spider '.*cuda.*'`. Use `module load cuda/<version>` to choose a version; for example, to load version 10 of the CUDA compiler, substitute the version 10 module name reported by `module avail cuda`.

The DCGM monitoring service needs to be disabled, and this must be done when you submit your job: `DISABLE_DCGM=1 salloc --gres=gpu:1`. When your job starts, DCGM will stop running within the following minute. For convenience, the following loop waits until the monitoring service has stopped (that is, as soon as grep returns …): `while …; do sleep 5; done`.

First use nvprof on a headless node to collect data, then visualize the timeline with the Visual Profiler.

Hi all, I am trying to assess the efficiency of host-to-device (and back again) memory transfers using the different transfer options available (cudaMemcpy, cudaMemcpyAsync, and cudaMallocManaged). My current setup involves making arbitrarily sized transfers and measuring the time each takes to complete, using both the host timer and CUDA events, and parsing the output of an API trace.

Heavy managed-memory (cudaMallocManaged) migration traffic will incur performance penalties in CUDA kernels, so consider using manual cudaMemcpy operations.

I'm profiling my CUDA program using nvprof (my program uses OpenMP multithreading), and I'm seeing that cudaLaunch takes 280 ns (min) and 58 ms (max).
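A measurement like the one in the transfer question, timing each copy with both a host clock and CUDA events, might look roughly like the sketch below. It is not the poster's code: the file name, the 64 MiB size, and the helper names `bandwidthGBs` and `timeCopy` are hypothetical. It compares cudaMemcpy from pageable memory with cudaMemcpyAsync from pinned memory. cudaMallocManaged is left out because managed data is migrated on demand when a kernel touches it, so there is no explicit copy call to time.

```cuda
// Hypothetical file name: transfer_timing.cu
#include <cuda_runtime.h>

#include <chrono>
#include <cstdio>
#include <cstdlib>
#include <cstring>

// Abort with file/line information if a CUDA runtime call fails.
#define CHECK(call)                                                \
  do {                                                             \
    cudaError_t err_ = (call);                                     \
    if (err_ != cudaSuccess) {                                     \
      std::fprintf(stderr, "%s:%d: %s\n", __FILE__, __LINE__,      \
                   cudaGetErrorString(err_));                      \
      std::exit(EXIT_FAILURE);                                     \
    }                                                              \
  } while (0)

// Effective bandwidth in GB/s (1 GB = 1e9 bytes) for `bytes` moved in `ms`.
double bandwidthGBs(size_t bytes, double ms) {
  return (static_cast<double>(bytes) / 1e9) / (ms / 1e3);
}

// Hypothetical helper: time one copy with a host clock and with a pair of
// CUDA events recorded on `stream`, then print both measurements.
template <typename CopyFn>
void timeCopy(const char* label, size_t bytes, cudaStream_t stream, CopyFn copy) {
  cudaEvent_t start, stop;
  CHECK(cudaEventCreate(&start));
  CHECK(cudaEventCreate(&stop));

  auto h0 = std::chrono::steady_clock::now();
  CHECK(cudaEventRecord(start, stream));
  copy();
  CHECK(cudaEventRecord(stop, stream));
  CHECK(cudaEventSynchronize(stop));  // block until the copy has finished
  auto h1 = std::chrono::steady_clock::now();

  float eventMs = 0.0f;
  CHECK(cudaEventElapsedTime(&eventMs, start, stop));
  double hostMs = std::chrono::duration<double, std::milli>(h1 - h0).count();
  std::printf("%-26s events %8.3f ms (%6.2f GB/s)   host %8.3f ms\n", label,
              eventMs, bandwidthGBs(bytes, eventMs), hostMs);

  CHECK(cudaEventDestroy(start));
  CHECK(cudaEventDestroy(stop));
}

int main() {
  const size_t bytes = size_t{64} << 20;  // 64 MiB: an arbitrary test size

  void* dev = nullptr;
  CHECK(cudaMalloc(&dev, bytes));
  void* pageable = std::malloc(bytes);  // ordinary (pageable) host memory
  if (pageable == nullptr) return EXIT_FAILURE;
  void* pinned = nullptr;
  CHECK(cudaMallocHost(&pinned, bytes));  // page-locked host memory
  std::memset(pageable, 1, bytes);
  std::memset(pinned, 1, bytes);

  cudaStream_t stream;
  CHECK(cudaStreamCreate(&stream));

  // Warm-up copy so one-time initialization cost is not timed below.
  CHECK(cudaMemcpy(dev, pageable, bytes, cudaMemcpyHostToDevice));

  timeCopy("cudaMemcpy (pageable)", bytes, 0, [&] {
    CHECK(cudaMemcpy(dev, pageable, bytes, cudaMemcpyHostToDevice));
  });
  // cudaMemcpyAsync can only overlap with host work when the source is pinned.
  timeCopy("cudaMemcpyAsync (pinned)", bytes, stream, [&] {
    CHECK(cudaMemcpyAsync(dev, pinned, bytes, cudaMemcpyHostToDevice, stream));
  });

  CHECK(cudaStreamDestroy(stream));
  CHECK(cudaFreeHost(pinned));
  std::free(pageable);
  CHECK(cudaFree(dev));
  return 0;
}
```

Compiling with `nvcc transfer_timing.cu -o transfer_timing` and running it under `nvprof ./transfer_timing` lets the event timings be compared with the profiler's own numbers for the same copies. The host-clock figure also includes API call overhead, so it is expected to be somewhat larger than the event figure.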
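To see how spread out the host-side cost of a kernel launch can be (as in the cudaLaunch min/max question), one rough cross-check is to time each launch call of an empty kernel on the host. This is a single-threaded sketch under assumed, arbitrary settings (1000 launches, a hypothetical `summarize` helper); it does not reproduce the OpenMP setup from the question. No warm-up is done, so the first sample keeps whatever one-time setup cost the process pays.

```cuda
// Hypothetical file name: launch_timing.cu
#include <cuda_runtime.h>

#include <algorithm>
#include <chrono>
#include <cstdio>
#include <cstdlib>
#include <vector>

// Abort with file/line information if a CUDA runtime call fails.
#define CHECK(call)                                                \
  do {                                                             \
    cudaError_t err_ = (call);                                     \
    if (err_ != cudaSuccess) {                                     \
      std::fprintf(stderr, "%s:%d: %s\n", __FILE__, __LINE__,      \
                   cudaGetErrorString(err_));                      \
      std::exit(EXIT_FAILURE);                                     \
    }                                                              \
  } while (0)

// A kernel that does nothing, so only launch overhead is measured.
__global__ void emptyKernel() {}

struct MinMax {
  double minNs;
  double maxNs;
};

// Smallest and largest entry of a non-empty sample vector.
MinMax summarize(const std::vector<double>& samples) {
  auto mm = std::minmax_element(samples.begin(), samples.end());
  return {*mm.first, *mm.second};
}

int main() {
  const int launches = 1000;  // arbitrary sample count
  std::vector<double> ns;
  ns.reserve(launches);

  // Time only the host side of each asynchronous launch call.
  for (int i = 0; i < launches; ++i) {
    auto t0 = std::chrono::steady_clock::now();
    emptyKernel<<<1, 1>>>();
    auto t1 = std::chrono::steady_clock::now();
    ns.push_back(std::chrono::duration<double, std::nano>(t1 - t0).count());
  }
  CHECK(cudaGetLastError());
  CHECK(cudaDeviceSynchronize());

  MinMax mm = summarize(ns);
  std::printf("launch call: first %.0f ns, min %.0f ns, max %.0f ns\n",
              ns.front(), mm.minNs, mm.maxNs);
  return 0;
}
```

If the first sample dominates the maximum, a warm-up launch before timing separates one-time setup from steady-state launch cost; if large values persist later on, they deserve a closer look in the nvprof API trace.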
With the CUDA Toolkit, you can develop, optimize, and deploy your applications on GPU-accelerated embedded systems, desktop workstations, enterprise data centers, cloud-based platforms, and HPC supercomputers.

Managed (unified) memory activity is displayed in the Managed Memory area of the timeline: an HtoD transfer indicates that a CUDA kernel accessed managed memory residing on the host, so kernel execution paused while the data was transferred to the device.
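The HtoD transfers in the Managed Memory area appear when a kernel touches managed memory that was last written on the host. The sketch below runs the same `scale` kernel twice: once on a cudaMallocManaged buffer initialized on the host, and once with explicit cudaMalloc and cudaMemcpy calls. The file name, sizes, and the `scale` and `allEqual` helpers are hypothetical. It assumes a GPU and platform with on-demand page migration (Pascal or newer on Linux); on other setups, managed data may instead be migrated in bulk when the kernel launches.

```cuda
// Hypothetical file name: managed_vs_explicit.cu
#include <cuda_runtime.h>

#include <cstdio>
#include <cstdlib>
#include <vector>

// Abort with file/line information if a CUDA runtime call fails.
#define CHECK(call)                                                \
  do {                                                             \
    cudaError_t err_ = (call);                                     \
    if (err_ != cudaSuccess) {                                     \
      std::fprintf(stderr, "%s:%d: %s\n", __FILE__, __LINE__,      \
                   cudaGetErrorString(err_));                      \
      std::exit(EXIT_FAILURE);                                     \
    }                                                              \
  } while (0)

// Multiply every element of `data` by `factor`.
__global__ void scale(float* data, int n, float factor) {
  int i = blockIdx.x * blockDim.x + threadIdx.x;
  if (i < n) data[i] *= factor;
}

// Host-side check that every element equals `expected`.
bool allEqual(const float* data, int n, float expected) {
  for (int i = 0; i < n; ++i)
    if (data[i] != expected) return false;
  return true;
}

int main() {
  const int n = 1 << 24;  // 16M floats: an arbitrary size
  const size_t bytes = static_cast<size_t>(n) * sizeof(float);
  const int threads = 256;
  const int blocks = (n + threads - 1) / threads;

  // Variant A: managed memory written on the host. The kernel's first access
  // triggers host-to-device migration (the Managed Memory HtoD entries).
  float* managed = nullptr;
  CHECK(cudaMallocManaged(&managed, bytes));
  for (int i = 0; i < n; ++i) managed[i] = 1.0f;
  scale<<<blocks, threads>>>(managed, n, 2.0f);
  CHECK(cudaGetLastError());
  CHECK(cudaDeviceSynchronize());  // required before the host reads managed data
  std::printf("managed:  %s\n", allEqual(managed, n, 2.0f) ? "ok" : "mismatch");
  CHECK(cudaFree(managed));

  // Variant B: a plain device buffer with manual cudaMemcpy in both directions.
  std::vector<float> host(n, 1.0f);
  float* dev = nullptr;
  CHECK(cudaMalloc(&dev, bytes));
  CHECK(cudaMemcpy(dev, host.data(), bytes, cudaMemcpyHostToDevice));
  scale<<<blocks, threads>>>(dev, n, 2.0f);
  CHECK(cudaGetLastError());
  // cudaMemcpy on the default stream waits for the kernel to finish first.
  CHECK(cudaMemcpy(host.data(), dev, bytes, cudaMemcpyDeviceToHost));
  std::printf("explicit: %s\n", allEqual(host.data(), n, 2.0f) ? "ok" : "mismatch");
  CHECK(cudaFree(dev));
  return 0;
}
```

Running it under `nvprof ./managed_vs_explicit`, or opening the collected profile in the Visual Profiler, should show unified-memory activity for the first variant and ordinary memcpy HtoD/DtoH entries for the second, which makes the cost of on-demand migration easy to compare with manual copies.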

In PyTorch, you can use `torch.cuda.memory_allocated()` and `torch.cuda.max_memory_allocated()` to monitor memory occupied by tensors, and `torch.cuda.memory_reserved()` and `torch.cuda.max_memory_reserved()` to monitor the total amount of memory managed by the caching allocator.

However, unused memory held by the caching allocator will still show as used in nvidia-smi.

nvprof also supports headless profile collection: the data it records on a node without a display can later be opened in the NVIDIA Visual Profiler. It can also print a textual report.
